flowchart TB

subgraph S1["STEP 1: Data Ingestion"]

D1["🔍 Classification"]

D2["📉 Regression"]

D3["📊 Clustering"]

end

subgraph S2["STEP 2: Model Training"]

B1["🎯 Model Selection"]

B2["🏋️ Training"]

B3["🧪 Validation"]

B4{"Ready? ✓/✗"}

B1 --> B2 --> B3 --> B4

end

subgraph S3["STEP 3: Deployment"]

C1["🚀 Deploy Model"]

C2["📊 Monitor Performance"]

C3["📈 Detect Drift"]

C4{"Degrading? ✓/✗"}

C1 --> C2 --> C3 --> C4

end

subgraph S4["STEP 4: Recovery"]

D1["⏪ Rollback to Previous Model"]

D2["🚨 Alert & Logging"]

D3["🔄 Continuous Improvement"]

D4["💡 Feed insights back"]

D1 --> D2 --> D3 --> D4

end

S1 ==> S2

B4 -->|No| B1

B4 -->|Yes| S3

C4 -->|No| C2

C4 -->|Yes| S4

D4 -.->|"Loop Back"| S1

style S1 fill:#e8f5e9,stroke:#2e7d32,stroke-width:2px

style S2 fill:#e3f2fd,stroke:#1565c0,stroke-width:2px

style S3 fill:#fff3e0,stroke:#ef6c00,stroke-width:2px

style S4 fill:#ffebee,stroke:#c62828,stroke-width:2px

The Mighty Robotic Elephant

— The Jungle’s AI Infrastructure Keeper

Introduction: The Jungle’s AI Infrastructure Keeper

The jungle hums with activity. The Robotic Owl scans the skies, the Robotic Fox learns from experience, and the Robotic Tiger prowls the terrain. But who keeps the AI ecosystem running smoothly? Amidst the towering trees, a colossal figure moves with steady precision—the Robotic Elephant. Unlike its companions, it does not hunt, learn from rewards, or analyze sensory input. Instead, it builds, maintains, and optimizes the AI systems that keep the jungle’s robotic inhabitants operational. But today, the Elephant faces a crisis—a data pipeline breakdown has thrown the AI creatures into chaos.

Crisis in the AI Jungle: A Data Failure Story

One morning, the Robotic Owl’s predictions start failing, its anomaly detection confused by inconsistent data. The Tiger misidentifies prey, lunging at rocks instead of gazelles. The Fox stops learning, unable to optimize its survival strategies. The culprit? A catastrophic failure in the AI pipeline—a mysterious anomaly has corrupted the data streams. To restore balance, the Robotic Elephant must act fast. It must:

Detecting and Isolating Corrupted Data

- The Elephant analyzes logs, searching for anomalies in time-series patterns.

- Uses data lineage tracking to pinpoint when and where the corruption began.

- Implements real-time anomaly detection using statistical outlier detection models.

# The Elephant's Anomaly Detection System

import numpy as np

from scipy import stats

def detect_data_anomalies(data_stream, threshold=3):

"""Detect corrupted data using Z-score analysis"""

z_scores = np.abs(stats.zscore(data_stream))

anomalies = np.where(z_scores > threshold)[0]

if len(anomalies) > 0:

print(f"🚨 ALERT: {len(anomalies)} anomalies detected!")

print(f"📍 Corrupted indices: {anomalies}")

return True, anomalies

return False, []

# Simulating the Owl's sensor data stream

sensor_data = [23, 25, 24, 26, 999, 24, 25, -500, 23] # Corrupted!

has_issues, bad_indices = detect_data_anomalies(sensor_data)

# Output: 🚨 ALERT: 2 anomalies detected!Rebuilding AI Models with Reliable Inputs

- Uses data validation pipelines to filter and repair corrupted records.

- Deploys shadow models to compare old vs. new AI predictions before final deployment.

- Ensures models are tested against historical benchmarks before re-integration.

Deploying Emergency Patches Across All AI Creatures

- The Tiger receives a model rollback, preventing further misidentifications.

- The Owl’s anomaly detection is fine-tuned, adapting to the new data stream.

- The Fox’s reinforcement learning process resumes, retraining based on validated insights.

And worst of all? The mischievous Robotic Monkey—a chaos agent designed to test AI robustness—has tampered with the pipeline, introducing randomized failures. Can the Elephant outthink the Monkey and restore order?

The Role of the Robotic Elephant: MLOps in Action

The Elephant represents MLOps (Machine Learning Operations)—the backbone of real-world AI deployments. Its role includes:

Continuous Integration & Deployment (CI/CD) for AI

- The Elephant must test and deploy AI models continuously, ensuring that they do not degrade over time.

- Automated pipelines verify model accuracy before they go live.

- The Elephant’s massive memory stores previous versions of models, allowing rollback when failures occur.

MLOps Pipeline Flowchart

The following diagram illustrates the complete MLOps lifecycle:

Data Versioning & Reproducibility

- AI creatures depend on high-quality, structured data.

- The Elephant maintains historical records, ensuring AI models can trace back errors to their root cause.

- Tools like DVC, MLflow, and Delta Lake help manage data consistency.

# The Elephant's Model Registry with MLflow

import mlflow

from mlflow.tracking import MlflowClient

# Log a new model version for the Tiger's prey detection

with mlflow.start_run(run_name="tiger_prey_detector_v2"):

mlflow.log_param("model_type", "YOLOv8")

mlflow.log_param("training_data_version", "jungle_dataset_2026_01")

mlflow.log_metric("accuracy", 0.94)

mlflow.log_metric("false_positive_rate", 0.02)

# Register the model

mlflow.sklearn.log_model(model, "tiger_model")

print("✅ Model versioned and ready for deployment!")

# Rollback to previous version if needed

client = MlflowClient()

client.transition_model_version_stage(

name="tiger_prey_detector",

version=1, # Rollback to stable version

stage="Production"

)Companies like Tesla and OpenAI version their data, ensuring models can be retrained on reliable datasets.

Model Monitoring & Drift Detection

- Over time, the jungle evolves—weather changes, prey patterns shift.

- The Elephant tracks concept drift, adjusting AI models dynamically.

- It sets alerts when models degrade, triggering automatic retraining.

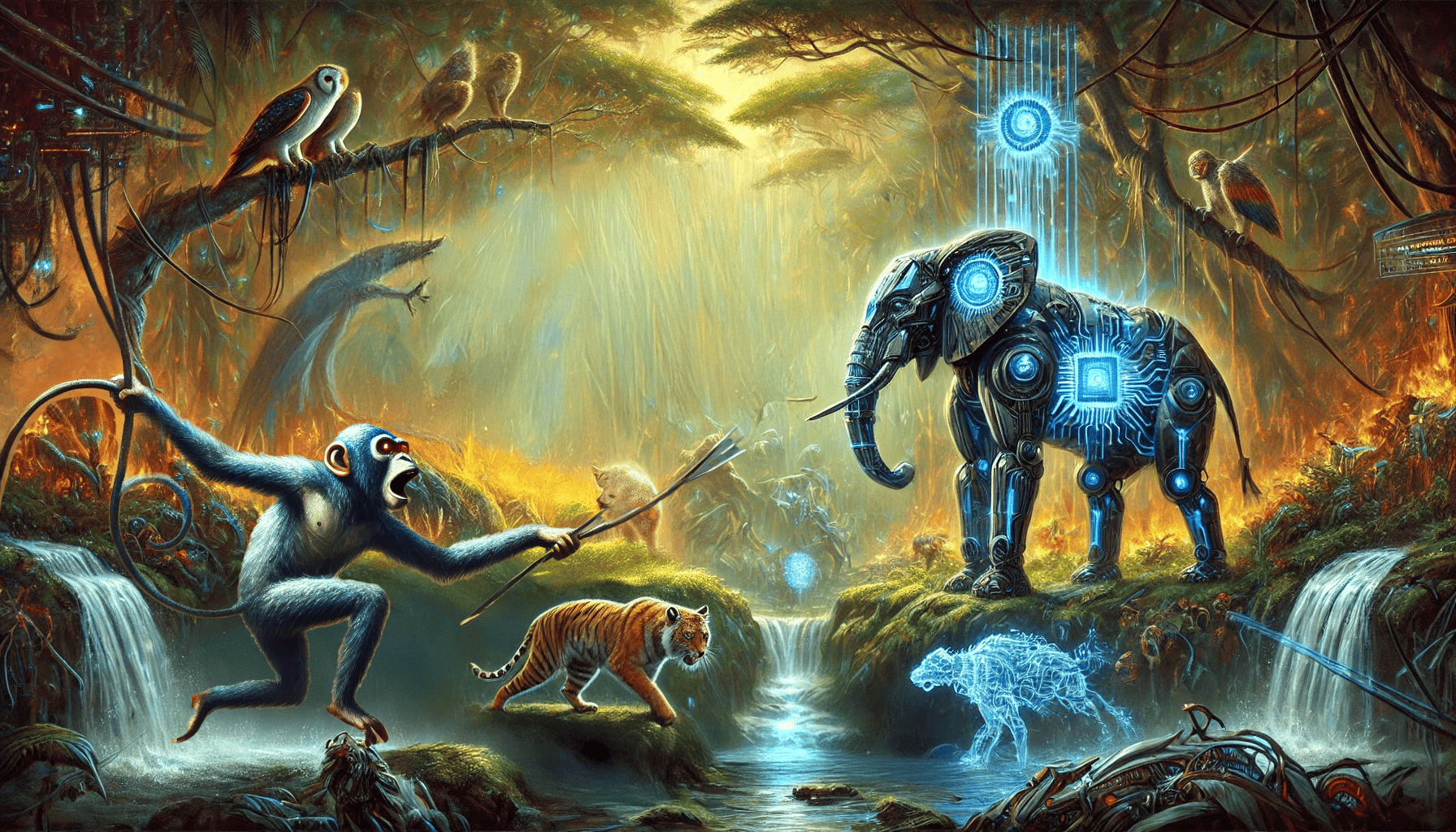

Showdown: The Elephant vs. the Monkey (Chaos Engineering in AI)

The Robotic Monkey, designed to simulate failures and test AI resilience, has exploited a weakness in the data pipeline. It introduced:

Inspired by Netflix’s Chaos Monkey (2011), chaos engineering intentionally injects failures into systems to test their resilience. In AI/ML systems, this means testing how models and pipelines respond to corrupted data, latency spikes, and infrastructure failures.

Identifying the Chaos Patterns

- Random data corruption in the Fox’s reinforcement learning logs.

- Latency spikes disrupting the Owl’s real-time anomaly detection.

- Model rollback failures, making the Tiger rely on outdated algorithms.

Common AI System Failure Modes

| Failure Type | Impact | Recovery Strategy |

|---|---|---|

| Data drift | Model accuracy degrades silently | Automated drift detection alerts |

| Feature store outage | Models receive stale features | Graceful degradation to cached values |

| GPU memory overflow | Training jobs crash | Auto-scaling with spot instances |

| API rate limiting | Inference latency spikes | Request queuing and load balancing |

| Model corruption | Predictions become unreliable | Automatic rollback to last stable version |

Reinforcing AI Resilience Against Failures

- Detecting anomalies in the AI Workflow: Automated monitoring dashboards identify failure patterns.

- Implementing Automated Recovery Mechanisms: Triggers rollback protocols, restoring AI models to a stable state.

- Proactive Chaos Engineering Tests: The Elephant deploys controlled AI failure simulations to preemptively test system resilience.

# The Monkey's Chaos Test Framework

import random

import time

class AIChaosTester:

"""Simulates failures to test AI system resilience"""

def inject_data_corruption(self, data, corruption_rate=0.1):

"""Randomly corrupt data points"""

corrupted = data.copy()

n_corrupt = int(len(data) * corruption_rate)

indices = random.sample(range(len(data)), n_corrupt)

for i in indices:

corrupted[i] = corrupted[i] * random.uniform(-10, 10)

print(f"🐵 Monkey corrupted {n_corrupt} data points!")

return corrupted

def simulate_latency_spike(self, base_latency_ms=50):

"""Inject random latency into inference calls"""

spike = random.uniform(500, 2000) # 500ms to 2s delay

time.sleep(spike / 1000)

print(f"🐵 Monkey added {spike:.0f}ms latency!")

return spike

def trigger_model_rollback_failure(self):

"""Simulate a failed rollback scenario"""

if random.random() < 0.3: # 30% failure rate

raise Exception("🐵 Rollback failed! Model registry unavailable.")

return True

# The Elephant runs chaos tests before production deployment

chaos = AIChaosTester()

try:

chaos.inject_data_corruption(test_data)

chaos.trigger_model_rollback_failure()

print("🐘 System passed chaos tests!")

except Exception as e:

print(f"🐘 Vulnerability found: {e}")In 2023, a major cloud provider experienced cascading AI failures when a routine model update corrupted the feature store. Companies now use tools like Gremlin, LitmusChaos, and AWS Fault Injection Simulator to proactively test ML pipelines.

The Future of AI Infrastructure: What’s Next?

With the AI ecosystem restored, the Elephant looks to the future. The landscape of AI infrastructure is evolving rapidly:

LLMOps: Managing Large Language Models at Scale

- Challenge: LLMs require specialized infrastructure—vector databases, prompt management, and evaluation pipelines.

- Emerging Tools: LangSmith, Weights & Biases Prompts, Arize Phoenix for LLM observability.

- Key Concern: Monitoring for hallucinations, prompt injection attacks, and response quality drift.

Federated Learning & Edge AI

- Decentralized AI training across multiple jungle zones—no raw data leaves the source.

- Real-world adoption: Google Keyboard (Gboard) trains on-device without sending keystrokes to servers.

- Edge deployment: TinyML enables AI on microcontrollers with <1KB memory (sensors, wearables).

GPU Orchestration & AI Compute

- Ray and Anyscale: Distributed computing frameworks that scale from laptop to 10,000 GPUs.

- Spot instances: Training large models on interruptible cloud GPUs at 70-90% cost savings.

- Multi-cloud strategies: Avoiding vendor lock-in by orchestrating across AWS, GCP, and Azure.

Serverless AI & Auto-Scaling Inference

- Models that scale to zero when idle, eliminating always-on costs.

- Platforms: Modal, Replicate, AWS SageMaker Serverless, Google Cloud Run.

- Enables burst scaling for unpredictable traffic patterns.

Green AI & Sustainable Computing

- Carbon-aware scheduling: Running training jobs when the electrical grid uses renewable energy.

- Model efficiency: Techniques like quantization, pruning, and distillation reduce compute by 10-100x.

- Industry commitment: Microsoft, Google, and Hugging Face now report model carbon footprints.

“The most powerful AI is not the largest—it’s the one that runs efficiently, fails gracefully, and serves its purpose without waste.”

Chapter Summary

- MLOps ensures AI models stay reliable, scalable, and secure.

- Data versioning, model monitoring, and CI/CD are essential for AI pipelines.

- Chaos Engineering prepares AI systems for real-world failures.

- The Robotic Elephant symbolizes resilience in AI ecosystems.

Next Chapter Preview: Multi-Agent AI Collaboration

As the Elephant fine-tunes the AI ecosystem, a new challenge arises—the need for AI agents to work together in real-time, coordinating actions like a digital symphony. Coming Up in Chapter 9: The Rise of Multi-Agent AI - How do the Tiger, Owl, Fox, and Elephant collaborate in a single AI system? - What happens when AI agents must negotiate and compromise? - Can a new mysterious AI entity unite them into a seamless intelligence network?

Stay tuned for the next evolution of AI: multi-agent intelligence!